AI Digest — Week of Feb 27

2026-02-27

Claude Opus 4.6 launches with native agent teams in Claude Code

Anthropic released Opus 4.6 on Feb 5 with a 1M-token context window and first-class "agent teams" in Claude Code: multiple AI agents work in parallel on different parts of a codebase and coordinate autonomously. It leads all frontier models on Terminal-Bench 2.0, an agentic coding benchmark. Directly applicable to any complex Pulse or SuperAudit feature work that can be split into parallel subtasks.

Claude Code: subagents, auto-memory, and smarter permissions

Claude Code now ships a formal subagents system: Markdown files with YAML frontmatter that define specialized agents, each with its own system prompt and scoped tool access. This week also added automatic saving to persistent memory, a /copy picker for code blocks, and smarter always-allow prefix matching that applies per subcommand. The subagent definitions map directly to the custom agents already in this project's .claude/ directory.
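
For a quick sanity check of those agent files, here is a minimal Python sketch that splits a subagent definition into its frontmatter fields and its Markdown body (the agent's system prompt). It assumes the `name`, `description`, and `tools` keys shown in Claude Code's subagent docs and handles only flat `key: value` lines, not full YAML. The `seo-auditor` agent is hypothetical.

```python
# Minimal sketch: split a Claude Code subagent file into frontmatter + prompt.
# Assumption: frontmatter holds only flat `key: value` lines (no nested YAML).


def parse_subagent(text: str) -> tuple[dict[str, str], str]:
    """Return (frontmatter fields, Markdown body) for a subagent definition."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing opening '---' frontmatter delimiter")
    # Find the closing delimiter that ends the frontmatter block.
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        raise ValueError("missing closing '---' frontmatter delimiter")
    meta: dict[str, str] = {}
    for line in lines[1:end]:
        if not line.strip():
            continue
        key, sep, value = line.partition(":")  # split on the first colon only
        if not sep:
            raise ValueError(f"unsupported frontmatter line: {line!r}")
        meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return meta, body


# Hypothetical agent definition, for illustration only.
EXAMPLE = """\
---
name: seo-auditor
description: Reviews crawl output and flags audit issues by severity.
tools: Read, Grep, Glob
---
You are an SEO audit specialist. Report each issue with its severity.
"""

meta, prompt = parse_subagent(EXAMPLE)
tools = [t.strip() for t in meta["tools"].split(",")]
print(meta["name"], tools)  # seo-auditor ['Read', 'Grep', 'Glob']
```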

Claude Sonnet 4.6: near-Opus performance at Sonnet pricing

Sonnet 4.6 is now the default Claude model. At $3/MTok input and $15/MTok output, it comes close to previous Opus-class performance on office tasks and coding. Claude's OSWorld computer-use score has jumped from under 15% in late 2024 to 72.5% today. Any Pulse or SuperAudit API calls still on Opus 4.5 should be benchmarked: switching to Sonnet 4.6 could cut AI costs significantly with minimal quality loss on most tasks.
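
The cost side of that benchmark is simple arithmetic. Here is a minimal sketch using the Sonnet 4.6 rates above. The monthly token volumes are hypothetical, and the current-model rates are left for you to fill in from Anthropic's pricing page.

```python
# Back-of-envelope cost check for moving a workload to Sonnet 4.6.
# Prices are USD per million tokens; Sonnet 4.6 rates are from the item above.

SONNET_46 = {"input": 3.00, "output": 15.00}


def cost_usd(input_tokens: int, output_tokens: int, price: dict[str, float]) -> float:
    """Dollar cost of a token volume at per-million-token prices."""
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


def savings_pct(current: dict[str, float], candidate: dict[str, float],
                input_tokens: int, output_tokens: int) -> float:
    """Percent saved by moving the same volume from `current` to `candidate` pricing."""
    before = cost_usd(input_tokens, output_tokens, current)
    after = cost_usd(input_tokens, output_tokens, candidate)
    return 100 * (before - after) / before


# Hypothetical monthly volume for one Pulse workload.
monthly_in, monthly_out = 40_000_000, 5_000_000
print(f"Sonnet 4.6: ${cost_usd(monthly_in, monthly_out, SONNET_46):,.2f}")  # Sonnet 4.6: $195.00
```

To compare, put the current model's published rates in a dict shaped like `SONNET_46` and call `savings_pct(current, SONNET_46, monthly_in, monthly_out)`. The benchmark run still has to confirm that quality holds. Cost alone doesn't settle the switch.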

Gemini 3.1 Pro preview: stronger reasoning for complex tasks

Google released Gemini 3.1 Pro in preview on Feb 26. It is aimed at multi-step problem-solving where "a simple answer isn't enough." Its reasoning benchmark results are significantly stronger than Gemini 3 Flash's, and it is available via Google AI Studio, Vertex AI, Gemini CLI, and the Gemini API. Both Pulse and SuperAudit use Gemini, and 3.1 Pro is the better fit for deeper analysis (audit report generation, email classification at scale), where reasoning depth matters more than throughput cost.

Figma MCP server: bidirectional design-to-code now in beta

Figma's MCP server is in beta and connects directly to Claude Code. The get_design_context tool pulls layouts, styles, and component data from Figma files into LLM context. generate_figma_design works in the other direction: it turns a live running UI into editable Figma frames. Dashboard component work in Pulse or SuperAudit can largely skip the manual design-spec step, since layout comes in from Figma and the implemented UI can be pushed back as editable frames.
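
Under the hood, Claude Code invokes these as MCP tools. Here is a minimal Python sketch of the JSON-RPC 2.0 `tools/call` message an MCP client sends. The tool name comes from the item above. The `nodeId` argument and its value are hypothetical, so check the server's `tools/list` response for the real input schema.

```python
import itertools
import json

# MCP requires request ids to be unique within a session; a counter suffices.
_ids = itertools.count(1)


def tools_call(name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


# Tool name from the item above; the argument key and node id are hypothetical.
msg = tools_call("get_design_context", {"nodeId": "12:34"})
print(msg)
```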

Pencil.dev MCP: canvas-to-React via Claude Code

Pencil.dev's MCP server runs alongside Claude Code and gives it tools to read .pen canvas files and generate production-ready React/HTML/CSS from visual layouts. It also accepts Figma copy-paste, preserving spacing and layer hierarchy. For frontend-heavy iterations on GBP Hub or upcoming Pulse dashboard features, the Pencil → Claude Code loop should substantially shorten the design-to-PR cycle.

OpenAI Responses API: hosted web search, file search, remote MCP, and connectors

OpenAI's Responses API now bundles web search, file search, computer use, code interpreter, and remote MCP as hosted built-in tools, so no client-side tool-execution code is required. OAuth-gated connectors add access to Google Drive, Dropbox, and Gmail via connector_id. A WebSocket transport cuts agent round-trip latency by 20–40%. This is relevant competitive context for any SuperAudit evaluation that benchmarks against OpenAI's agentic API surface.
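
Here is a minimal sketch of a request body for `POST https://api.openai.com/v1/responses` that enables hosted web search and a remote MCP server. A SuperAudit comparison harness could use it to send the same task to OpenAI. The tool fields follow OpenAI's published docs as we understand them and should be verified before use. The server label and URL are hypothetical, and the model id is left as a placeholder.

```python
import json


def build_responses_request(model: str, task: str, mcp_url: str) -> dict:
    """Body for POST /v1/responses; the tools execute on OpenAI's side, not here."""
    return {
        "model": model,
        "input": task,
        "tools": [
            {"type": "web_search"},  # hosted web search
            {
                "type": "mcp",                  # hosted remote MCP tool
                "server_label": "audit_tools",  # hypothetical label
                "server_url": mcp_url,          # hypothetical server
                "require_approval": "never",
            },
        ],
    }


body = build_responses_request(
    model="MODEL_ID",  # placeholder: fill in the model under test
    task="Summarize the top technical SEO issues on https://example.com",
    mcp_url="https://mcp.example.com/mcp",
)
print(json.dumps(body, indent=2))
```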

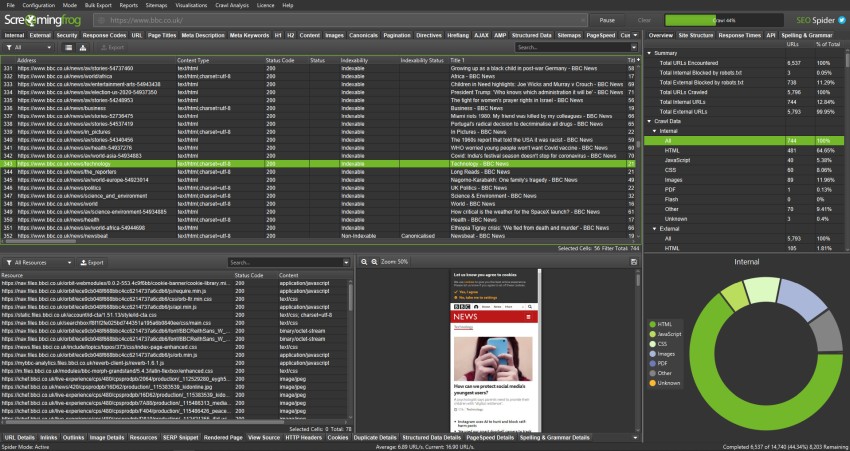

Screaming Frog 23.3: Lighthouse 13 audit overhaul and Googlebot 2MB limit

Versions 23.0/23.3 update the Lighthouse integration to match Lighthouse 13's renamed and retired audits. They also check crawled files against Googlebot's updated file size limit of 2MB (down from 15MB) and add two new issue types: HTML Document Over 2MB and Resource Over 2MB. SuperAudit's audit issue taxonomy should adopt the Lighthouse 13 names. Otherwise, any audit report comparing SuperAudit output to a Screaming Frog crawl will show label mismatches.
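
SuperAudit could mirror the two new issue types with a check like this minimal sketch. It assumes "2MB" means 2 × 1024 × 1024 bytes of uncompressed content, which should be confirmed against Google's documentation before relying on edge cases. The `CrawledFile` record is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Assumption: "2MB" means 2 * 1024 * 1024 bytes of uncompressed content.
LIMIT_BYTES = 2 * 1024 * 1024


@dataclass
class CrawledFile:
    url: str
    content_type: str  # raw Content-Type header value
    size_bytes: int    # uncompressed size


def size_issue(f: CrawledFile) -> Optional[str]:
    """Return the matching over-limit issue label, or None if within the limit."""
    if f.size_bytes <= LIMIT_BYTES:
        return None
    mime = f.content_type.split(";")[0].strip().lower()  # drop charset etc.
    if mime == "text/html":
        return "HTML Document Over 2MB"
    return "Resource Over 2MB"


print(size_issue(CrawledFile("https://example.com/", "text/html; charset=utf-8", 3_000_000)))
```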

Google Search Console 2026: AI filter config and API v2 expansion

Two separate GSC updates landed. First, the Performance report gained an AI-powered configuration mode: describe your analysis in plain language, and it maps the request to filters, comparisons, and date ranges automatically. Second, Google is expanding Search Console API v2 to cover Core Web Vitals, video, and structured data metrics. For Pulse's GSC integration, API v2 coverage means automated CWV regression monitoring can be tied directly to performance changes without manual Console access.

Core Web Vitals 2026: INP threshold tightens to 150ms, new SVT metric

Google's 2026 CWV update moves the INP "good" threshold from 200ms to 150ms and introduces Smooth Visual Transitions (SVT) — a new metric penalizing janky page-load renders, now treated as a hard ranking factor rather than a soft signal. SuperAudit's audit output should flag INP above 150ms as failing the 2026 threshold. SVT is a candidate for the next SuperAudit release cycle since it won't appear in any existing Lighthouse audit pass/fail output.
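
Here is a minimal sketch of the rating SuperAudit could apply to field INP at p75, the percentile that Core Web Vitals assessments use. The 150ms boundary comes from the item above. Keeping the 500ms "poor" boundary is an assumption, since only the change to the "good" threshold is described.

```python
GOOD_MAX_MS = 150    # 2026 "good" threshold, per the item above
POOR_ABOVE_MS = 500  # assumption: "poor" boundary unchanged


def rate_inp(p75_ms: float) -> str:
    """Classify a page's p75 INP into the usual three CWV buckets."""
    if p75_ms <= GOOD_MAX_MS:
        return "good"
    if p75_ms <= POOR_ABOVE_MS:
        return "needs improvement"
    return "poor"


print(rate_inp(180))  # needs improvement (was "good" under the 200ms threshold)
```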

MCP ecosystem surpasses 8,600 servers — Salesforce, Amplitude, Asana live

The MCP server directory lists over 8,600 servers (up from ~1,400 six months ago). The biggest single expansion: Salesforce, Amplitude, Asana, Box, Clay, and Hex launched official remote MCP servers simultaneously. Supermetrics also shipped an MCP server connecting AI agents to live marketing performance data — worth evaluating as a complement to Pulse's BigQuery pipeline for clients who use Supermetrics for reporting.

Google Business Profile: scheduled posts, bulk multi-location publishing, AI Q&A

GBP gained three capabilities. The posts API now supports scheduled publishing (set a date and time, and the post publishes automatically). A single post can be pushed to all locations at once for multi-location accounts. An AI-generated Q&A system auto-answers common customer questions from business data and reviews. For GBP Hub, the scheduled post API endpoint and bulk multi-location publishing are the two highest-leverage new capabilities to integrate, since both reduce the time account managers spend managing each location's GBP manually.
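
Here is a client-side sketch of the multi-location pattern, assuming each location still receives its own post. It builds one request per location using the GBP v4 `accounts/{account}/locations/{location}/localPosts` resource path and the standard `languageCode`, `summary`, and `topicType` post fields. The item doesn't name the scheduling field, so `scheduledPublishTime` is a hypothetical placeholder. The new bulk endpoint may make this loop unnecessary.

```python
from datetime import datetime, timezone


def build_post_requests(account_id: str, location_ids: list[str],
                        summary: str, publish_at: datetime) -> list[tuple[str, dict]]:
    """One (parent resource path, LocalPost body) pair per location."""
    if publish_at.tzinfo is None:
        raise ValueError("publish_at must be timezone-aware")
    when = publish_at.astimezone(timezone.utc).isoformat().replace("+00:00", "Z")
    requests = []
    for loc in location_ids:
        body = {
            "languageCode": "en-US",
            "summary": summary,
            "topicType": "STANDARD",
            "scheduledPublishTime": when,  # HYPOTHETICAL field name for scheduling
        }
        requests.append((f"accounts/{account_id}/locations/{loc}/localPosts", body))
    return requests


reqs = build_post_requests("111", ["1", "2", "3"], "Spring opening hours",
                           datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc))
```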

Google AI Overviews: organic traffic impact and new GSC filter

Google AI Overviews now appear across a significantly wider range of queries, including local, product, and informational searches that previously drove reliable organic clicks. Search Console added a dedicated AI Overviews filter to the Performance report. It shows impressions and clicks attributed to AI Overviews separately from standard blue-link positions. For Pulse, this filter is the clearest signal to date of which client pages are being cannibalized by AI Overviews (formerly SGE), and it is worth surfacing as a first-class metric in the GSC integration.
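
Here is a minimal sketch of that metric. It assumes the filtered and unfiltered Performance data has already been exported per page. The field names and the 30% share cutoff are hypothetical. A high AI Overview impression share combined with a low AI Overview CTR is the pattern worth flagging.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    page: str
    aio_impressions: int  # impressions attributed to AI Overviews
    aio_clicks: int
    std_impressions: int  # impressions from standard results
    std_clicks: int


def ctr(clicks: int, impressions: int) -> float:
    return clicks / impressions if impressions else 0.0


def aio_exposed_pages(rows: list[PageStats], min_share: float = 0.3) -> list[tuple[str, float, float, float]]:
    """Pages whose AI Overview impression share is at least `min_share`, highest first.

    Each tuple is (page, AIO impression share, AIO CTR, standard-result CTR).
    """
    out = []
    for r in rows:
        total = r.aio_impressions + r.std_impressions
        if total == 0:
            continue
        share = r.aio_impressions / total
        if share >= min_share:
            out.append((r.page, share, ctr(r.aio_clicks, r.aio_impressions),
                        ctr(r.std_clicks, r.std_impressions)))
    return sorted(out, key=lambda t: t[1], reverse=True)
```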

Cloudflare Workers AI: edge inference with fine-tuning and LoRA support

Cloudflare Workers AI now supports LoRA adapters: upload fine-tuned adapter weights and run a customized model from the same edge network that serves NKP client sites. Latency runs under 50ms for most inference calls from Cloudflare's 300+ PoPs, and the service exposes OpenAI-compatible endpoints that work with the OpenAI SDK. For Cloudflare Pages deployments, this opens the door to lightweight AI features (on-page Q&A, content classification) without a separate hosted model endpoint. The Cloudflare account already in use is sufficient.
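
Here is a minimal stdlib sketch of a call to the OpenAI-compatible chat completions endpoint. It builds the request without sending it. The URL pattern follows Cloudflare's Workers AI docs as we understand them, `@cf/meta/llama-3.1-8b-instruct` is one example catalog model, and the account id and token are placeholders. Selecting a LoRA adapter isn't shown, because how adapters are passed on this endpoint should be checked in Cloudflare's docs.

```python
import json
import urllib.request


def workers_ai_chat_request(account_id: str, api_token: str,
                            model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    url = f"https://api.cloudflare.com/client/v4/accounts/{account_id}/ai/v1/chat/completions"
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_token}", "Content-Type": "application/json"},
        method="POST",
    )


req = workers_ai_chat_request("ACCOUNT_ID", "API_TOKEN",
                              "@cf/meta/llama-3.1-8b-instruct",
                              "Classify this page as product, service, or blog.")
# urllib.request.urlopen(req) would send it; not executed here.
```

With the OpenAI SDK, the equivalent setup is pointing `base_url` at `https://api.cloudflare.com/client/v4/accounts/<account_id>/ai/v1`.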

Google Ads API v20: performance metrics expansion and AI asset generation

Google Ads API v20 adds granular performance breakdown fields (search term impression share by device, asset-level conversion data, and campaign-level quality score signals). It also adds a new endpoint for programmatically generating and submitting AI-generated responsive search ad assets. For any future Pulse integration that tracks paid search alongside organic, v20 is the version to target. Its asset-level conversion data should close the longstanding gap between RSA performance and keyword-level attribution.

Looker Studio: native BigQuery ML scoring and scheduled refresh via Connected Sheets


Looker Studio now supports live BigQuery ML model scoring directly in reports. A BQML model result can be joined to a Looker Studio data source and refreshed on a schedule without an intermediate Scheduled Query or export step. Connected Sheets gained a Looker Studio sync mode, so BigQuery data surfaces in both tools from a single extraction. For the per-domain Looker Studio embed on the Pulse roadmap, this removes the manual BigQuery → Sheets → Studio pipeline that currently needs a cron trigger for each client refresh.
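
In BigQuery, BQML scoring is expressed with `ML.PREDICT`, which Looker Studio's BigQuery connector can run as a custom query. Here is a minimal Python helper that builds that SQL per client. The project, dataset, model, and table names are hypothetical, and the sketch assumes the model's input columns exist in the source table.

```python
def bqml_scoring_sql(project: str, dataset: str, model: str, source_table: str) -> str:
    """Custom-query SQL that scores every row of `source_table` with a BQML model."""
    return (
        "SELECT *\n"
        "FROM ML.PREDICT(\n"
        f"  MODEL `{project}.{dataset}.{model}`,\n"
        f"  (SELECT * FROM `{project}.{dataset}.{source_table}`)\n"
        ")"
    )


# Hypothetical names for one client's dataset.
print(bqml_scoring_sql("my-project", "pulse_client_a", "ctr_forecast", "daily_gsc"))
```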

PageSpeed Insights 2026: INP percentile breakdown and SVT field data beta

The PageSpeed Insights API now returns INP at p75, p90, and p99 separately in the field data response. Previously only p75 was available, which masked tail latency on high-traffic pages. Smooth Visual Transitions (SVT) also entered a field data beta for Chrome users, and SVT scores now appear in the CrUX dataset alongside LCP and INP. SuperAudit's PSI integration should be updated to surface p90 INP for pages where p75 passes but p90 fails the new 150ms threshold. This is exactly the class of issue that shows up in audits as "borderline good" and then fails in production under real load.
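
Here is a minimal sketch of that check. `borderline_inp` takes the p75 and p90 values however the PSI response exposes them, and no field names are assumed here. `nearest_rank` computes the same percentiles from raw RUM samples, which shows how a passing p75 can hide a failing tail. PSI's percentiles come from CrUX's own aggregation, so values from this helper are comparable but not identical.

```python
import math

INP_GOOD_MS = 150  # 2026 "good" threshold cited above


def nearest_rank(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def borderline_inp(p75_ms: float, p90_ms: float, threshold: float = INP_GOOD_MS) -> bool:
    """True when p75 passes the threshold but p90 does not."""
    return p75_ms <= threshold < p90_ms


# Hypothetical RUM samples: 75 fast interactions, 25 slow ones.
samples = [80.0] * 75 + [220.0] * 25
p75, p90 = nearest_rank(samples, 75), nearest_rank(samples, 90)
print(p75, p90, borderline_inp(p75, p90))  # 80.0 220.0 True
```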